2025 One Hertz Challenge: Estimating Pi With An Arduino Nano R4

Humanity pretty much has Pi figured out at this point. We’ve calculated it many times over and are confident about what it is down to many, many decimal places. However, if you fancy estimating it with some electronic assistance, you might find this project from [Roni Bandini] interesting.

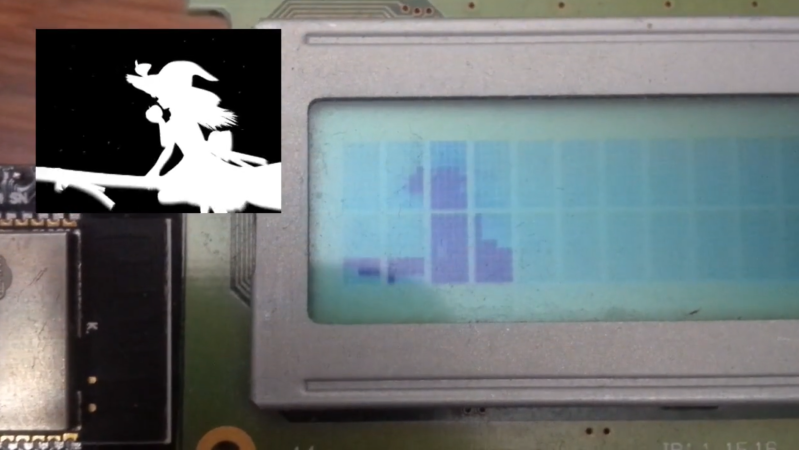

[Roni] programmed an Arduino Nano R4 to estimate Pi using the Monte Carlo method. In this case, that means drawing a circle inscribed inside a square and then scattering points randomly inside the square. Each point is classified as inside or outside the circle by checking whether its distance from the center of the square is no greater than the circle's radius. A circle of radius r has an area of πr², while the square around it has sides of 2r and an area of 4r², so the circle covers exactly π/4 of the square. The ratio of points inside the circle to the total number of points therefore approximates that same fraction, Pi/4. Multiply the ratio by 4, and you've got your approximation of Pi.
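To make the method concrete, here is a minimal desktop sketch in Python. It is not [Roni]'s Arduino code. The function name `estimate_pi`, the seeded generator, and the choice of a square spanning -1 to 1 around a circle of radius 1 are our own assumptions for illustration.

```python
import random


def estimate_pi(samples: int, seed: int = 0) -> float:
    """Monte Carlo estimate of Pi from `samples` random points.

    Points are drawn uniformly from the square [-1, 1] x [-1, 1]; the
    inscribed circle has radius 1 and shares the square's center.
    """
    rng = random.Random(seed)  # seeded so results are reproducible
    inside = 0
    for _ in range(samples):
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        # The point is inside the circle if its distance from the center is
        # at most the radius (1); comparing squared values avoids a sqrt.
        if x * x + y * y <= 1.0:
            inside += 1
    # inside / samples approximates circle area / square area = Pi / 4.
    return 4.0 * inside / samples


if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        print(f"{n:>9} samples: pi ~= {estimate_pi(n):.5f}")
```

Running it shows the estimate wobbling at small sample counts and settling closer to 3.14159 as the count grows.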

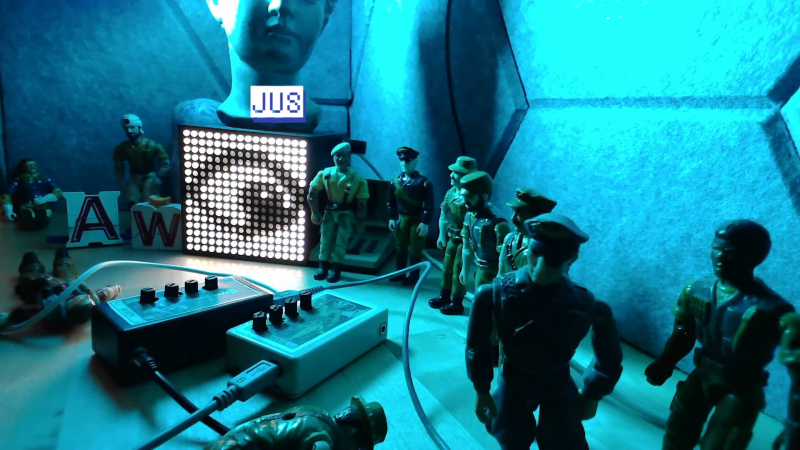

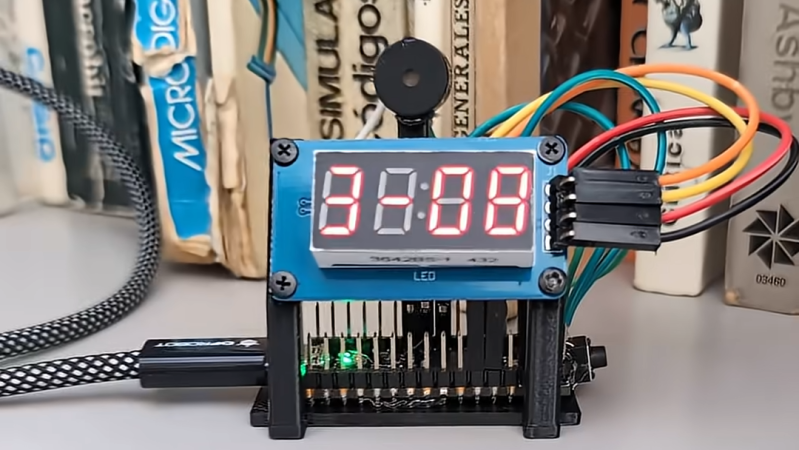

[Roni]'s program runs the Monte Carlo simulation on the Nano R4 itself, taking advantage of the board's onboard Floating Point Unit (FPU) to speed up the floating-point arithmetic each sample requires. It generates 100 new samples every second, refining its estimate of Pi as the sample count grows. The current result is shown on a 7-segment display, and the device beeps while it runs. [Roni] readily admits the finished project looks a little too much like a classic Hollywood bomb.
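Because the device refines one running estimate rather than starting over each second, it has to keep cumulative counts between batches. The sketch below is one hypothetical way to structure that in Python. `RunningPiEstimate` and `add_batch` are illustrative names, not taken from the project, and the demo loop runs its ten "seconds" back to back instead of pacing them with a timer as real hardware would.

```python
import math
import random


class RunningPiEstimate:
    """Hypothetical helper: keeps running Monte Carlo counts across batches."""

    def __init__(self, seed: int = 0) -> None:
        self.rng = random.Random(seed)  # seeded for reproducible runs
        self.inside = 0  # points that landed inside the circle so far
        self.total = 0   # all points drawn so far

    def add_batch(self, batch_size: int = 100) -> float:
        """Draw `batch_size` new points and return the updated estimate."""
        for _ in range(batch_size):
            x = self.rng.uniform(-1.0, 1.0)
            y = self.rng.uniform(-1.0, 1.0)
            if x * x + y * y <= 1.0:  # within radius 1 of the center
                self.inside += 1
        self.total += batch_size
        return self.estimate()

    def estimate(self) -> float:
        """Current estimate of Pi, or NaN before any points are drawn."""
        if self.total == 0:
            return math.nan
        return 4.0 * self.inside / self.total


if __name__ == "__main__":
    est = RunningPiEstimate(seed=1)
    # Simulate ten one-second ticks, each adding a batch of 100 samples.
    for second in range(1, 11):
        value = est.add_batch(100)
        print(f"t={second:2d}s  samples={est.total:5d}  pi ~= {value:.4f}")
```

A rate of 100 samples per second gains precision slowly: the statistical error of a Monte Carlo estimate shrinks roughly with the square root of the sample count, so each additional correct digit needs about 100 times as many samples.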

We’ve seen some other neat Pi-calculating projects before, too.