Building a Camera Slider Instead of Buying One Goes Awry

[TheHyperFix] had a problem. He’d spied a brilliant camera slider, but didn’t want to lay out big money to acquire it. The natural solution? Build one! Only, life is seldom so straightforward.

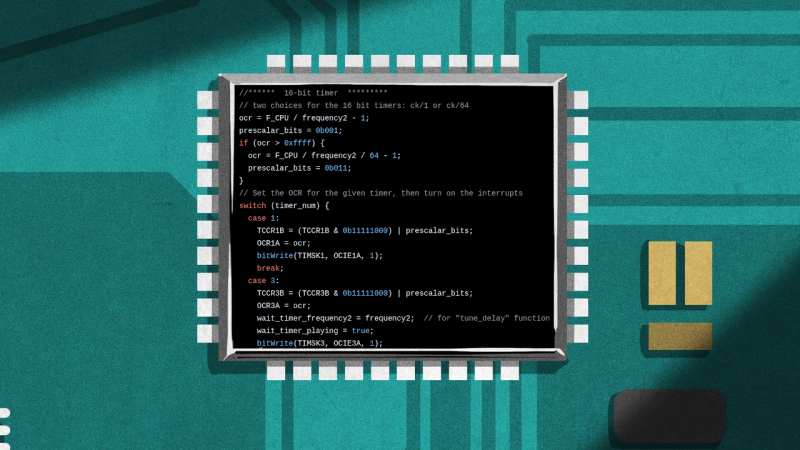

The plan was simple: take an old broken 3D printer, and repurpose its parts to make a camera slider instead. The build started with an aluminium extrusion, some V-slot wheels, and a 3D printed platform to hold the camera. A belt drive moved the platform, powered by the printer’s stepper motors, with some software telling the original printer controller what to do.

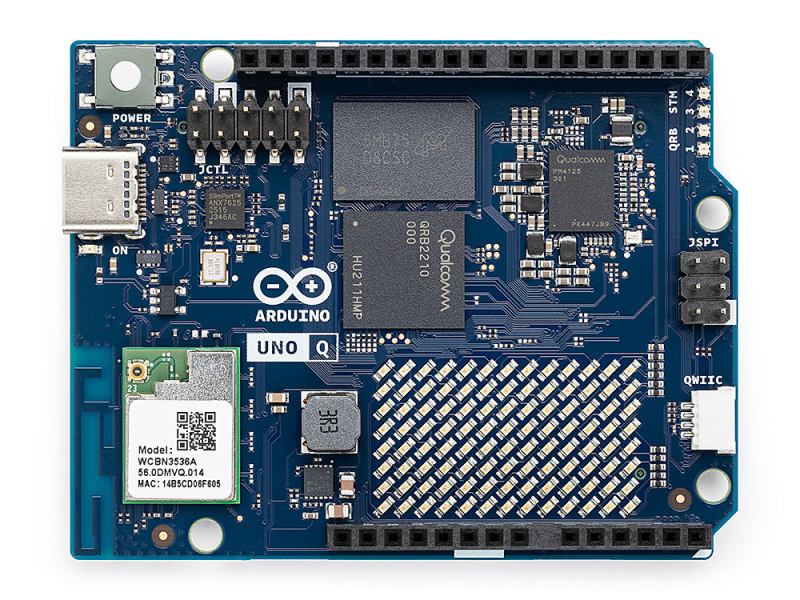

Unfortunately, the early experiments failed when the controller blew up under load. An Arduino with a CNC shield was subbed in, which got things back on track, and [TheHyperFix] had a somewhat functional slider with relatively jerky movement. A tough iterative design process ensued to work out problems with the bearings and the Arduino’s pulse limit, among others.

As it stands, the slider is semi-functional, but it’s not quite well-behaved enough to use for professional shooting. Still, for a first attempt at electronics prototyping, we think [TheHyperFix] did a pretty solid job. It might not be all there yet, but it’s well on the way, and a great deal was learned in the process.

If you’re trying to build a camera slider in a hurry, you might like to try recreating one of the builds we’ve featured before. Video after the break.