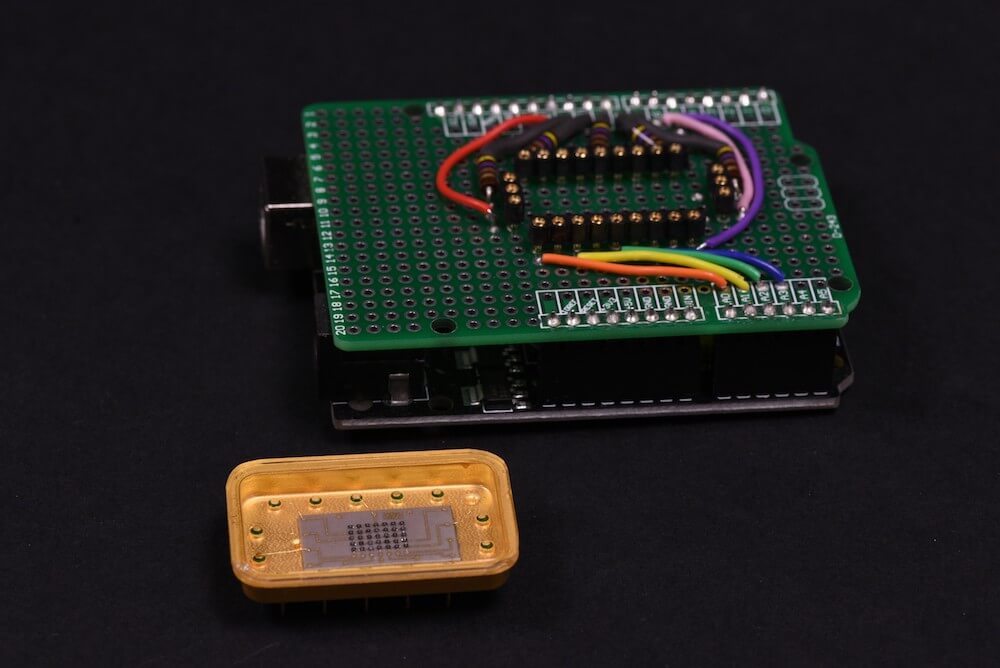

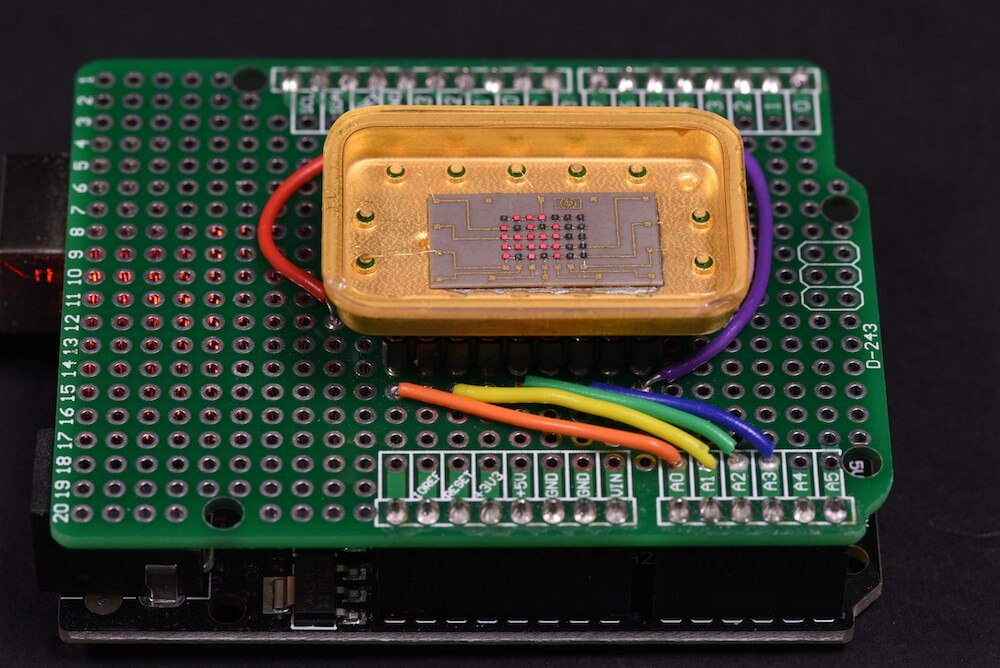

Second project based on the Arduino Uno and FFT code. (First one: Project 1.)

Short description (EDITED: the project is stopped. I lost my inspiration and am working on visual recognition now, so I decided to publish the code in case someone else finds it useful and wants to continue the research).

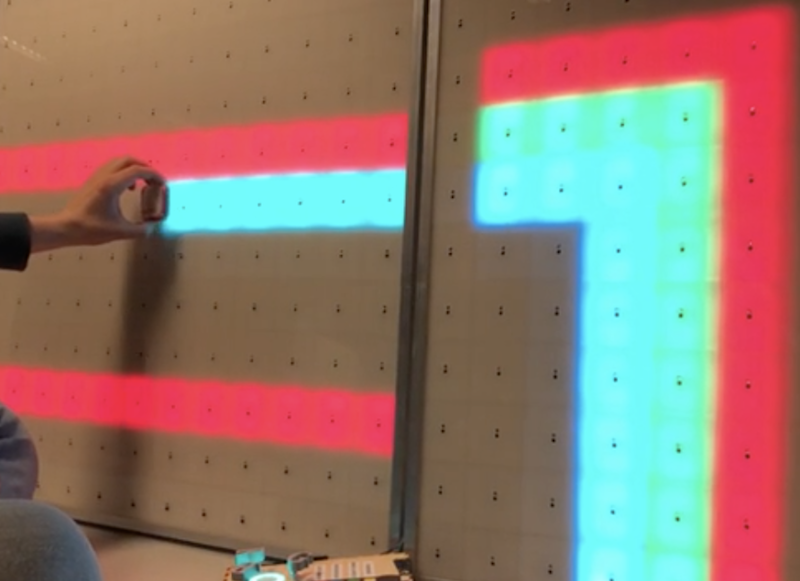

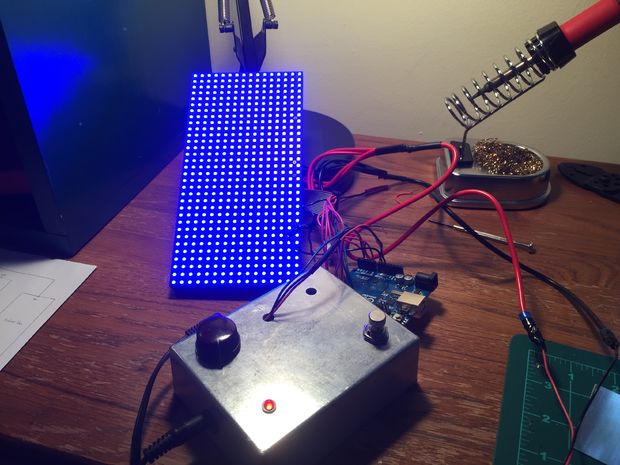

The main array is 1024 bytes, split 512 / 512 between the real and imaginary parts, and the output is 256 bins (only half of the real part is used, the other half is a mirror image). The sampling rate is 4 kHz. The upper note is B6 (1975.5 Hz), shown on the yellow line by the right-side LED; the lower note is C3 (130.8 Hz), shown on the red line by the left-side LED. Frequency resolution is approximately 7.8 Hz per bin. Processing and sampling run in parallel. The sampling array holds 256 samples. Once a frame is captured (256 x 0.25 msec = 64 msec), the data are transferred to the 1024-byte processing array, the missing 256 real samples are "zero padded", and sampling continues without interruption. I used zero padding on purpose to make the "response time" of the LED matrix as short as possible, so that real-time 1/16 notes can be seen at the same moment they are played.
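The numbers in this paragraph can be checked with a few lines of C++. This is only a sketch of the arithmetic and of the zero-padding step, not the code from the published sketch: the names `kCaptured`, `kFftSize`, `binWidthHz`, `frameMs` and `zeroPad` are made up for illustration, and signed 8-bit samples are assumed.

```cpp
#include <cstdint>

// Values taken from the description above.
constexpr double   kSampleRateHz = 4000.0; // 4 kHz sampling rate
constexpr uint16_t kCaptured     = 256;    // samples in one capture frame
constexpr uint16_t kFftSize      = 512;    // real points after zero padding

// Width of one FFT bin in Hz (4000 / 512 = 7.8125).
constexpr double binWidthHz() { return kSampleRateHz / kFftSize; }

// Duration of one capture frame in milliseconds (256 x 0.25 = 64).
constexpr double frameMs() { return 1000.0 * kCaptured / kSampleRateHz; }

// Hypothetical helper: copy one capture frame into the processing
// arrays, fill the remaining real samples with zeros and clear the
// imaginary part.
inline void zeroPad(const int8_t* captured, int8_t* real, int8_t* imag) {
    for (uint16_t i = 0; i < kFftSize; ++i) {
        real[i] = (i < kCaptured) ? captured[i] : 0;
        imag[i] = 0;
    }
}
```

Zero padding does not add information to the spectrum; it interpolates it onto a finer bin grid, which is why the capture window can stay at 64 msec while the bins are spaced 7.8 Hz apart.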

After the computational cycle is completed (~36 msec), the main program executes a "cognitive core function" to decide which notes / tones have to be displayed on the LED matrix.

To minimize the error rate of this process, Masking Shadow Theory (MST) was developed. A masking shadow is calculated for each note in several steps, and the result is compared with the note's magnitude in the current cycle. If the magnitude is less than the shadow, then the LED corresponding to this note does not light up. There are five steps for now, but as I said, this is a work in progress.

Step 1 (masking shadow 0): noise floor, which is common for all notes.

Step 2 (masking shadow 1): shadow from neighboring notes, which includes 8 notes on the left and right side, in inverse proportion to their distances.

Step 3 (masking shadow 2): shadow from the note located 1 octave below. In other words, a cross check that the current bin's value isn't the second harmonic of a note sounding 1 octave below it.

Step 4 (masking shadow 3): similar to step 3; the only difference is that the cross check is whether the current bin (note) is the third harmonic of a note sounding below it.

Step 5 (masking shadow 5): similar to steps 3 and 4, a cross check that the current note isn't the fifth harmonic of a note sounding below it.

The masking shadow is multiplied by note-specific coefficients after steps 3 – 5, to accommodate the significantly richer spectral content of the lower octaves. The formula for calculating the coefficients is the trickiest part of the whole project. What I've discovered is that the coefficients don't just vary between notes / tones, they also vary dynamically during the "life-time" of the tone.
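To make the five steps a bit more concrete, here is a rough sketch of how such a check could look. It is not the code from the published sketch, and several things are assumptions: one magnitude per note (48 notes, C3 to B6), 8 neighbours on each side, the shadows combined by taking the largest one, and a single fixed `coeffPercent` standing in for the note-specific, time-varying coefficients. The semitone offsets 12, 19 and 28 are the nearest notes to the second, third and fifth harmonics.

```cpp
#include <cstdint>

constexpr int kNotes         = 48; // 4 octaves, index 0 = C3, 47 = B6
constexpr int kNeighbourSpan = 8;  // assumed: 8 notes on each side

// Step 2: each neighbour contributes its magnitude divided by its
// distance in semitones (assumed form of "inverse proportion").
inline uint16_t neighbourShadow(const uint8_t* mag, int n) {
    uint16_t s = 0;
    for (int d = 1; d <= kNeighbourSpan; ++d) {
        if (n - d >= 0)     s += mag[n - d] / d;
        if (n + d < kNotes) s += mag[n + d] / d;
    }
    return s;
}

// Steps 3 to 5: shadow from the lower note whose harmonic lands on
// note n, scaled by a hypothetical coefficient given in percent.
inline uint16_t harmonicShadow(const uint8_t* mag, int n, int offset,
                               uint8_t coeffPercent) {
    if (n - offset < 0) return 0;
    return static_cast<uint16_t>(mag[n - offset] * coeffPercent / 100);
}

// A note is lit only if its magnitude exceeds the largest shadow.
inline bool noteIsLit(const uint8_t* mag, int n, uint8_t noiseFloor,
                      uint8_t coeffPercent) {
    static const int kHarmonicOffsets[] = {12, 19, 28};
    uint16_t shadow = noiseFloor;               // step 1: noise floor
    uint16_t s = neighbourShadow(mag, n);       // step 2: neighbours
    if (s > shadow) shadow = s;
    for (int offset : kHarmonicOffsets) {       // steps 3 to 5
        s = harmonicShadow(mag, n, offset, coeffPercent);
        if (s > shadow) shadow = s;
    }
    return mag[n] > shadow;
}
```

With a loud C3 (magnitude 200) and a coefficient of 50 percent, a C4 at magnitude 60 stays dark because it is treated as the second harmonic of C3, while a C4 at magnitude 150 lights up.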

25 Sept. 2011

Two variables have been considered: speed and octave range. A third, "non-technical" one is the price of the project.

Octave range is defined by the RAM available on the chip: 2K on the UNO. As the processing array size must be a power of 2 (FFT Radix-2), the array can't be more than 1024 bytes; the next value, 2048, is the size of all RAM, which obviously can't be taken. The size of the array defines the maximum number of frequency bins at the output: 1024 / 4 = 256. It is divided by 4 because there are real and imaginary parts, and only half of the real part carries data. What is interesting is that a musical octave is nothing else than a doubling of the tone's frequency, so it follows binary arithmetic rules. If I count from the high side, the upper octave occupies half of all 256 bins, from bin 256 to bin 128, simply because musical tones are spaced logarithmically in frequency (log base 2) and bins are spaced equally. The next octave takes half of what is left over, from bin 128 to bin 64. The third octave goes from 64 to 32, and the fourth from 32 to 16. Can I go further down? No. There are 12 notes in each octave. It means that after the fourth octave, counting down, I arrive at a location where bins and tones are spaced almost in sequence: bin 16 – C3, bin 17 – C3#, bin 18 – D3. Well, there are four more bins (15, 14, 13, and 12), but they don't change much, as they cover only 1/3 of an octave and would only complicate multiplexing the LED display.
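The halving described above is easy to reproduce. The sketch below is only an illustration of that arithmetic; `octaveBins` and `binsPerNote` are made-up names, and octave 0 is taken to be the highest one.

```cpp
#include <cstdint>

constexpr uint16_t kBins = 256; // output bins of the FFT

struct BinRange {
    uint16_t low;
    uint16_t high;
};

// Octave 0 is the highest octave; every octave below it spans
// half as many bins as the one above.
constexpr BinRange octaveBins(uint8_t octaveFromTop) {
    return BinRange{static_cast<uint16_t>(kBins >> (octaveFromTop + 1)),
                    static_cast<uint16_t>(kBins >> octaveFromTop)};
}

// Average number of bins available for each of the 12 notes.
constexpr double binsPerNote(uint8_t octaveFromTop) {
    return (octaveBins(octaveFromTop).high -
            octaveBins(octaveFromTop).low) / 12.0;
}
```

For the fourth octave from the top this gives bins 16 to 32, about 1.3 bins per note. A fifth octave would get bins 8 to 16, less than one bin per note, which is why the range stops at four.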

This is why the octave range variable = 4. Summing up, increasing the octave range by 1 (to 5 octaves) would require double the memory (a 2K processing array, still possible on a chip with 4K of RAM), and by 2 (to 6 octaves) four times more memory (a 4K processing array).
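The same doubling rule can be written down as a small helper. This is a sketch under the assumption that the sampling rate and the top note stay the same; `arrayBytesForOctaves`, `fitsInRam` and the `reserveBytes` parameter (RAM kept free for everything else) are hypothetical names, not taken from the sketch.

```cpp
#include <cstdint>

constexpr uint8_t  kBaseOctaves    = 4;    // range achieved on the UNO
constexpr uint32_t kBaseArrayBytes = 1024; // processing array for 4 octaves

// Processing array size in bytes for a given octave range (4 or more):
// every extra octave at the low end doubles the array.
constexpr uint32_t arrayBytesForOctaves(uint8_t octaves) {
    return kBaseArrayBytes << (octaves - kBaseOctaves);
}

// True if the array fits in RAM while leaving reserveBytes for the
// sampling buffer, stack and other variables.
constexpr bool fitsInRam(uint8_t octaves, uint32_t ramBytes,
                         uint32_t reserveBytes) {
    return arrayBytesForOctaves(octaves) + reserveBytes <= ramBytes;
}
```

For example, with 512 bytes held back, `fitsInRam(5, 2048, 512)` is false and `fitsInRam(5, 4096, 512)` is true, which matches the 2K and 4K figures above.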

The Arduino Mega board has 8K of RAM; would it be better to design the project around it? First, it costs more money. Second, it has the same CPU performance, and as you will see below, having more memory without a faster CPU doesn't make any sense. The CPU wouldn't be able to process a bigger volume of data in time.

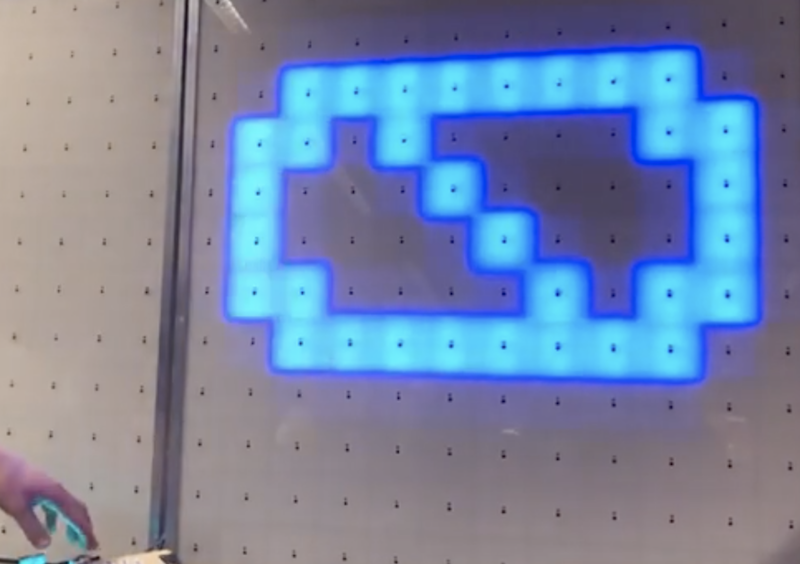

Now let's have a look at the speed variable. The following math (and the design itself) greatly depends on it. For at least a 1/16 note to look visually "alive", the whole cycle (sampling, pre-processing, FFT, post-processing) has to be completed in less than 64 msec. My impression is that when the timing is a little bit longer, the LED display looks like it shows something that was played last Saturday night. The invisible real-time connection between "light" and "music" gets broken.

The octave range variable (4 octaves, to be specific), and especially the low notes starting from C3, begs for 8 Hz resolution, as that equals the distance between C3 and C3#, and consequently for a 128 msec sampling frame. So there is a contradiction. To solve it, zero padding was introduced, which helps to keep the sampling window down to 64 msec while the frequency resolution stays not very far from 8 Hz. To make a real-time live show, sampling must continue without interruption. Even more, it has to be "overlapped", as pre-processing (windowing) would cut off the beginning and the end of the sampling pool. All three other functions (FFT, pre- and post-processing) have to run in parallel and must be completed within the same 64 msec time frame.

The Arduino platform has an 8-bit microprocessor with a low-horsepower engine under the hood. This is why 8-bit math was selected instead of 16-bit (which would have saved me a lot of trouble). The trouble I'm talking about is the very low dynamic range when integer math and 8 bits come together in an FFT. Integer math, which gives nice time performance, puts a really hard constraint on dynamic range, because it performs "scaling" before and after every "butterfly". And every scaling procedure brings in a rounding error, which grows enormously, as there are 2304 butterflies (9216 rounding operations) in an N = 512 FFT. Special attention must be paid to keeping the rounding error under control, while not increasing the calculation time too much, or integer math would not make any sense.

What I found out is that there is an excellent algorithm for "symmetrical" 1/2 bit rounding, but it almost doubles the FFT calculation time, which I obviously could not afford. So I chose another path to increase dynamic range: a compression algorithm on the input data. The easiest way to do it is "clipping". Set a couple of lines in the code:

```cpp
// Hard clipping of the input sample to the signed 8-bit range.
if ( x[i] > 127 ) x[i] = 127;
if ( x[i] < -127 ) x[i] = -127;
```

and all is good. Not quite. It does a great job for any other signal (vibration from an accelerometer, for example), except music.

Clipping generates a very high level of harmonics, and once again, that couldn't be afforded, as it undermines the basic idea of the project: MUSICAL note recognition.
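The butterfly and rounding counts mentioned earlier can be verified with the sketch below. A radix-2 FFT of N points has log2(N) stages of N/2 butterflies each; counting four rounded values per butterfly (two complex outputs) is an assumption that happens to match the 9216 figure. The two halving helpers only illustrate the difference between plain truncation and a symmetric rounding. They are one possible reading of "symmetrical" rounding, not the algorithm referred to above, and all names are made up.

```cpp
#include <cstdint>

// Number of radix-2 stages for an N-point FFT (N a power of two).
constexpr uint8_t stages(uint16_t n) {
    return n <= 1 ? 0 : 1 + stages(n >> 1);
}

// N/2 butterflies in each stage.
constexpr uint32_t butterflies(uint16_t n) {
    return static_cast<uint32_t>(n / 2) * stages(n);
}

// Assumed: each butterfly rounds 4 values (2 outputs, real and imaginary).
constexpr uint32_t roundingOps(uint16_t n) { return 4 * butterflies(n); }

// Scaling by 1/2 with a plain shift. With an arithmetic right shift
// (as on avr-gcc) this always rounds down, so errors share one sign.
inline int8_t halveTruncate(int16_t v) {
    return static_cast<int8_t>(v >> 1);
}

// Scaling by 1/2 that rounds halves away from zero, so positive and
// negative values are treated the same way.
inline int8_t halveRounded(int16_t v) {
    return static_cast<int8_t>(v >= 0 ? (v + 1) / 2 : (v - 1) / 2);
}
```

The extra compare and add in `halveRounded` have to be paid for every scaled value, which gives a feeling for why a more careful rounding costs so much in a loop that runs thousands of times per frame.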

Summary: scaling extends the dynamic range of the integer FFT by 24 dB (4 bits).

8-bit FFT dynamic range: +36 dB;

scaling: +24 dB;

noise: -3 dB;

---

Overall: 57 dB.
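The "24 dB (4 bits)" conversion follows from the usual rule of about 6 dB per bit, and the total is a plain sum. A minimal sketch of that arithmetic, with `bitsToDb` and `overallDb` as made-up names and the 36 dB and 3 dB figures taken as given from the summary:

```cpp
#include <cmath>

// Dynamic range in dB for a given number of bits: 20 * log10(2^bits),
// which is roughly 6.02 dB per bit.
inline double bitsToDb(double bits) {
    return 20.0 * std::log10(2.0) * bits;
}

// Sum of the three terms of the budget, all in dB.
inline double overallDb(double fftDb, double scalingDb, double noiseDb) {
    return fftDb + scalingDb + noiseDb;
}
```

Here `bitsToDb(4)` comes out at about 24.1 dB, and `overallDb(36, 24, -3)` gives the 57 dB quoted above.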

Link to download the sketch:

Arduino_Musical_Notes_Recognition