Arduino Synthesizer Uses Modified Slide Pots

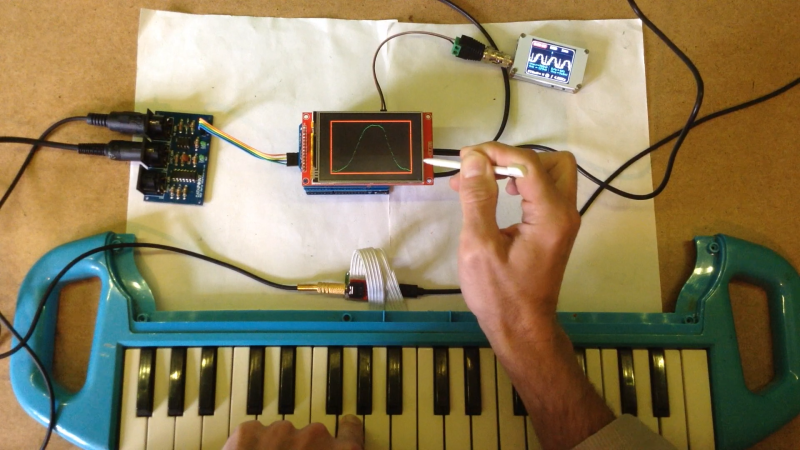

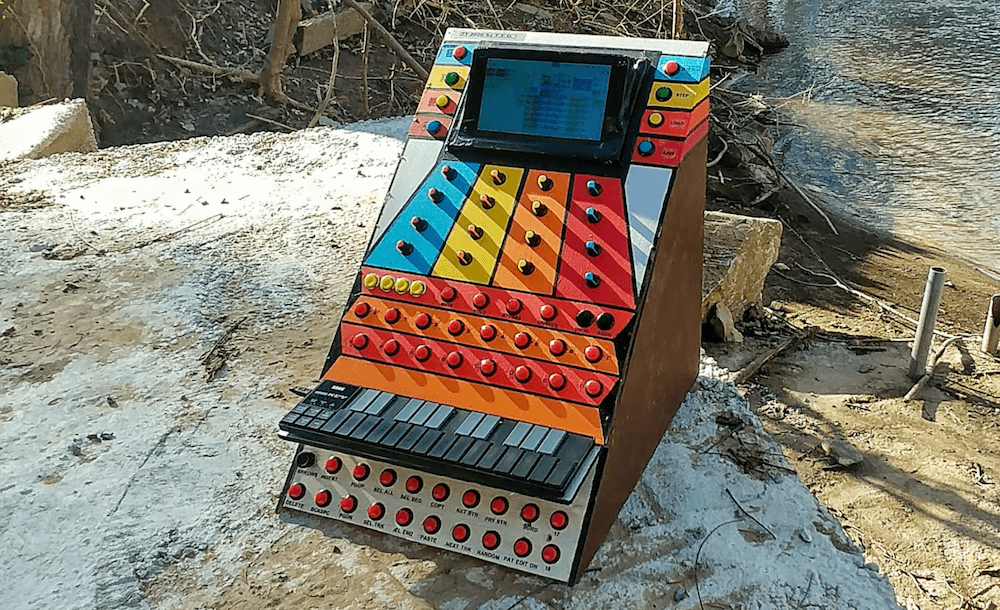

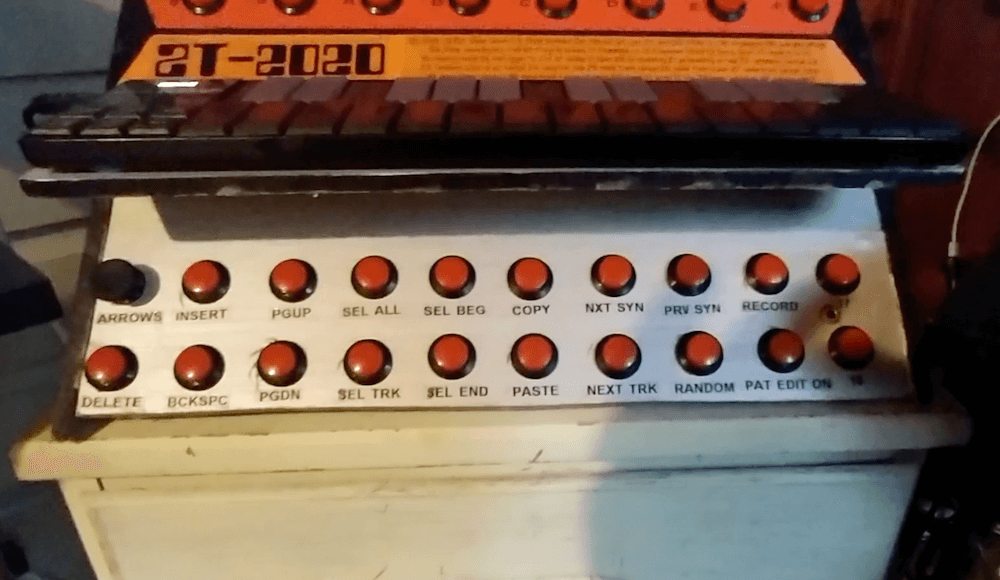

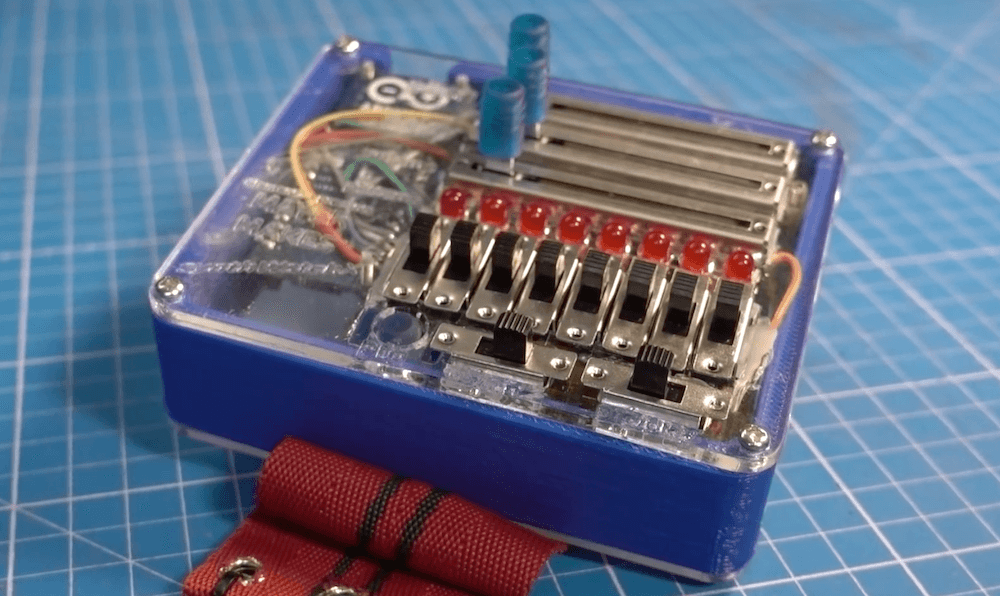

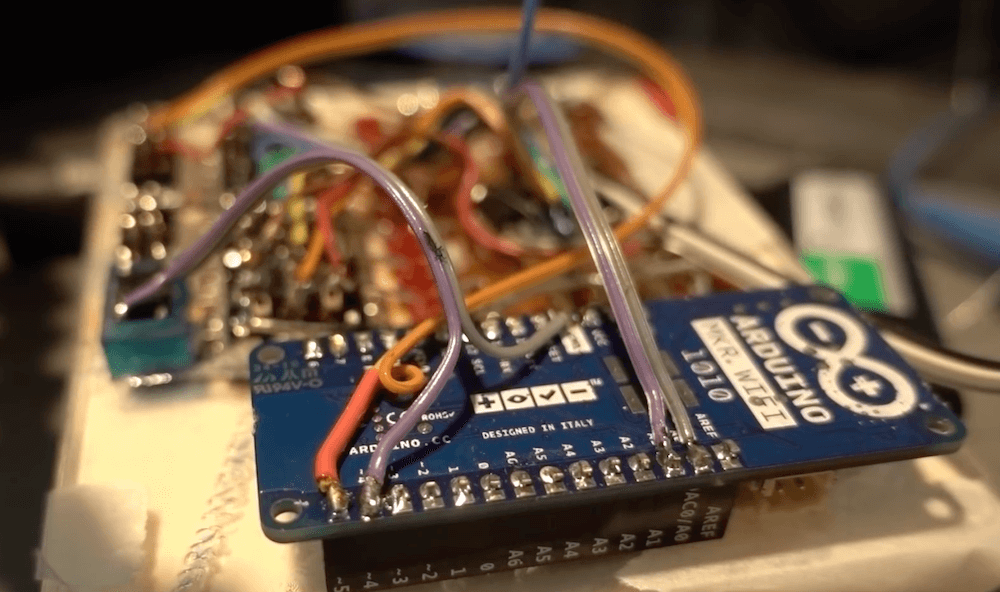

There comes a point in every Arduino’s life where, if it’s lucky, it becomes a permanent fixture in a project. We can’t think of too many better forever homes for an Arduino than inside of a 3D-printed synthesizer such as this 17-key number by [ignargomez] et al.

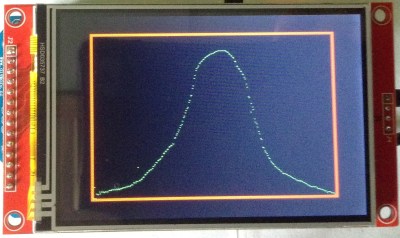

While there are myriad ways to build a synthesizer, this one uses the tried-and-true method of FM synthesis courtesy of an Arduino Nano R3. In addition to the 17 keys, there are eight potentiometers here: four are used for FM synthesis control, and the other four are dedicated to attack/decay/sustain/release (ADSR) control of the sound envelope.
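For anyone who hasn't met FM synthesis or ADSR envelopes before, here is a minimal sketch of the math in plain C++. This is not the project's firmware. The struct and function names (`FmVoice`, `Adsr`, `fmSample`, `adsrLevel`) are hypothetical, and we are only assuming the textbook two-operator form, where one sine wave modulates the phase of another and a piecewise-linear envelope scales the result. A real Nano build would likely use integer math and lookup tables rather than `double` and `std::sin`.

```cpp
#include <cmath>

// Illustrative only: all names and parameters here are assumptions,
// not taken from the synthesizer's actual code.
constexpr double kTwoPi = 6.283185307179586;

struct FmVoice {
    double carrierHz;  // pitch of the key being played
    double ratio;      // modulator frequency = ratio * carrierHz
    double index;      // modulation depth; 0 gives a plain sine wave
};

struct Adsr {
    double attack;   // seconds to rise from 0 to full level
    double decay;    // seconds to fall from full level to the sustain level
    double sustain;  // level held while the key is down (0..1)
    double release;  // seconds to fall to 0 after the key is released
};

// Two-operator FM: the modulator sine is added to the carrier's phase.
double fmSample(const FmVoice& v, double t) {
    const double modulator = std::sin(kTwoPi * v.carrierHz * v.ratio * t);
    return std::sin(kTwoPi * v.carrierHz * t + v.index * modulator);
}

// Envelope level while the key is still held, t seconds after key-down.
double heldLevel(const Adsr& e, double t) {
    if (t < e.attack) return t / e.attack;  // attack ramp
    t -= e.attack;
    if (t < e.decay) return 1.0 - (1.0 - e.sustain) * (t / e.decay);  // decay ramp
    return e.sustain;  // sustain plateau
}

// Envelope level t seconds after key-down, for a key held `held` seconds.
double adsrLevel(const Adsr& e, double t, double held) {
    if (t < 0.0) return 0.0;
    if (t < held) return heldLevel(e, t);
    // Release: fade linearly from whatever level was reached at key-up.
    const double start = heldLevel(e, held);
    if (e.release <= 0.0) return 0.0;
    const double r = (t - held) / e.release;
    return r >= 1.0 ? 0.0 : start * (1.0 - r);
}
```

Multiplying `fmSample(voice, t)` by `adsrLevel(env, t, held)` gives the shaped output sample. In a build like this one, it is reasonable to guess that four pots would feed something like the FM parameters and the other four the `Adsr` fields, but the exact mapping is not documented in the write-up.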

One of the interesting things here is that [ignargomez] and their team were short a few regular pots and modified a couple of slide pots for circular use; we wish there were more information on that. As a result, the 3D-printed enclosure underwent several iterations. Be sure to check out the brief demo after the break.

Don’t have any spare Arduinos? The BBC Micro:bit likes to make noise, too.