This animatronic device turns speech into sign language

Using a couple of Arduinos, a team of Makers at a recent McHacks 24-hour hackathon developed a speech-to-sign language automaton.

Alex Foley, along with Clive Chan, Colin Daly, and Wilson Wu, wanted to make a tool to help with translation between oral and sign languages. What they came up with is an animatronic setup that listens to speech via a computer interface and then translates it into sign language.

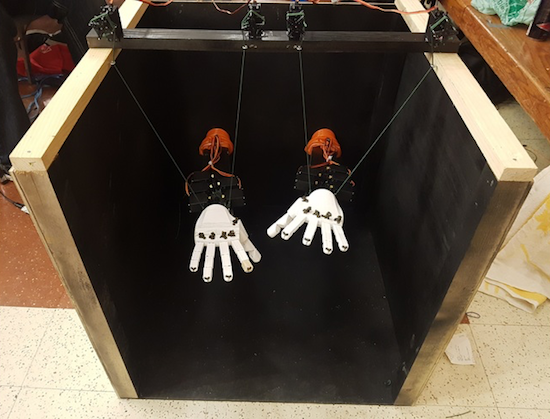

The device takes the form of two 3D-printed hands, which are controlled by servos and a pair of Arduino Unos. In addition to speech translation, the setup can sense hand motions using Leap Motion’s API, allowing it to mirror a person’s gestures.
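To give a rough idea of how recognized text might become servo positions, here is a minimal sketch of the computer-side step. It is not the team's code: the `POSES` table, the curl convention, the one-servo-per-finger layout, and the function names are all assumptions made for illustration, and only a few fingerspelled letters are included.

```python
# Hypothetical sketch, not the team's implementation.
# Assumes one servo per finger and a 0-180 degree servo range.
FINGERS = ("thumb", "index", "middle", "ring", "pinky")

# Assumed convention: 0.0 = finger fully extended, 1.0 = fully curled.
# Only a handful of fingerspelled letters, for illustration.
POSES = {
    "a": (0.0, 1.0, 1.0, 1.0, 1.0),  # fist with the thumb alongside
    "b": (1.0, 0.0, 0.0, 0.0, 0.0),  # flat hand, thumb folded in
    "l": (0.0, 0.0, 1.0, 1.0, 1.0),  # thumb and index extended
    "y": (0.0, 1.0, 1.0, 1.0, 0.0),  # thumb and pinky extended
}


def curl_to_angle(curl, min_angle=0, max_angle=180):
    """Map a curl amount in [0, 1] linearly onto a servo angle in degrees."""
    return round(min_angle + curl * (max_angle - min_angle))


def word_to_frames(word):
    """Turn a word into one 5-byte frame per known letter.

    Each byte is the target angle for one finger, in FINGERS order.
    Letters missing from POSES are skipped.
    """
    frames = []
    for ch in word.lower():
        pose = POSES.get(ch)
        if pose is None:
            continue
        frames.append(bytes(curl_to_angle(c) for c in pose))
    return frames


if __name__ == "__main__":
    for frame in word_to_frames("Lab"):
        print(dict(zip(FINGERS, frame)))
```

In a setup like this, each frame could be written to a serial port for a board to read and pass on to its servos; the actual protocol the team used is described in Foley’s write-up, not here.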

You can read about the development process in Foley’s Medium write-up, including their first attempt at control using a single Mega board.